Visual grounding

Using the source input as ground truth helps users trust the system and makes it easy to interpret its process and see what might have gone wrong.

When checking data, I want to be able to see how the system arrived at its answer, so I can trust the data and identify any potential errors in the process.

- AI Transparency and Explainability: Make AI systems transparent and understandable by explaining how and why decisions are made.

- Multimodal Context: In this example, the context is an image of a receipt, but context can also come from other modalities, such as audio.

More of the Witlist

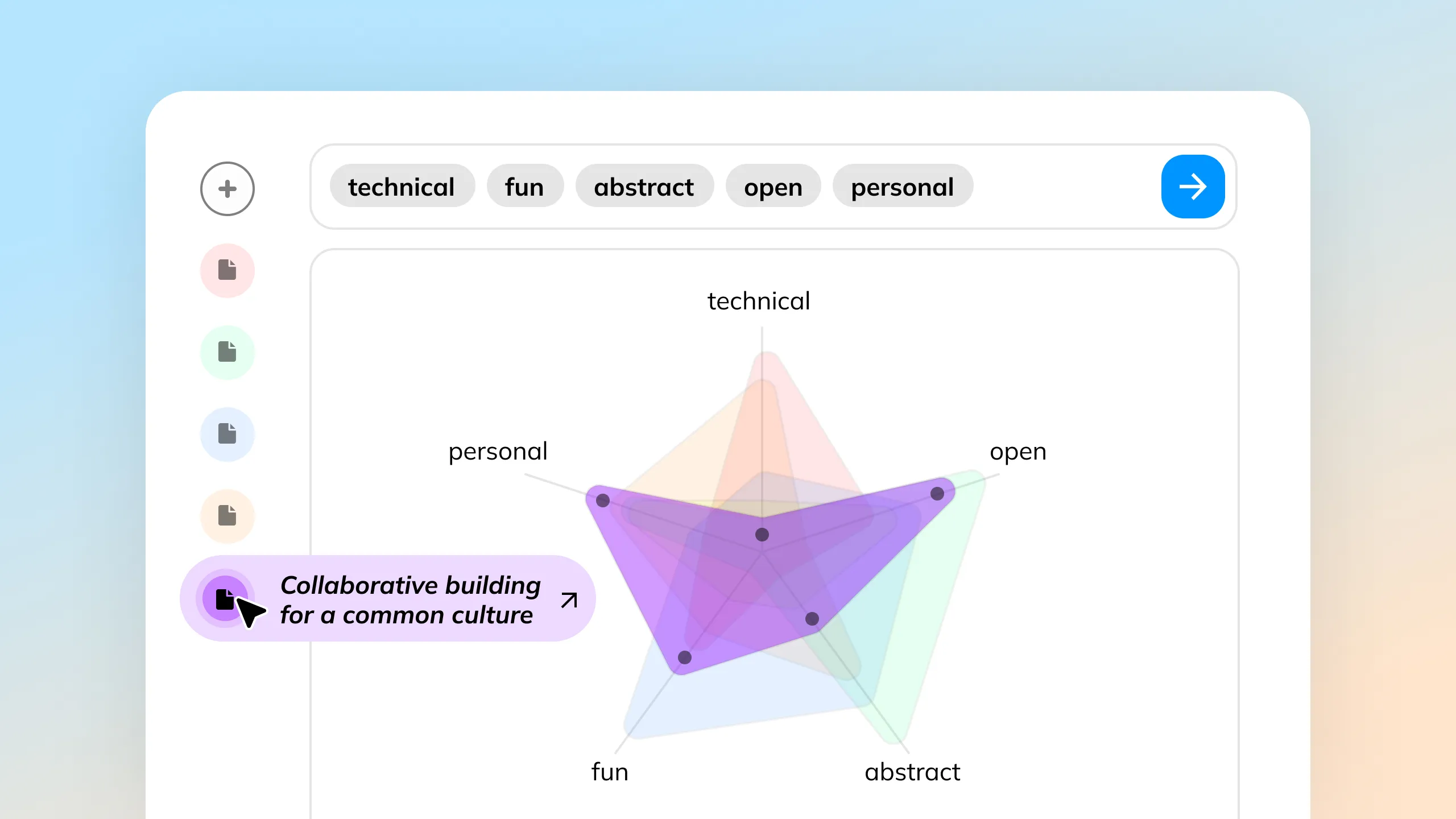

Input design concepts in small pieces and see the cumulative output in real time. Explore different combinations and immediately visualize the results, making the creative process interactive and flexible.

AI collaboration agents can act as writing partners that assist people by enhancing their content through transparent, easily understandable suggestions, while respecting the original input.

Textual information often misses intuitive cues for understanding relationships between ideas. AI can clarify these connections, making complex information easier to grasp quickly.

Presenting multiple outputs helps users explore and identify their preferences, provides valuable insights into their choices, and can even capture user feedback for model improvement.

AI can enhance live chat streams by analyzing real-time data, identifying trends, and driving interactive elements like voting to boost audience engagement.

Comprehend and compare large documents by visualizing embeddings and their scores, giving a clear, concise overview of vast data sources in a single, intuitive view.
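The pattern does not say which score is shown. A common choice is the similarity between embedding vectors, so the sketch below assumes cosine similarity as the score. It is a minimal, non-authoritative illustration: the toy vectors stand in for output from a real embedding model, and `score_chunks` and the chunk labels are hypothetical names.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # Treat zero vectors as unrelated rather than dividing by zero.
    return dot / (norm_a * norm_b)


def score_chunks(query: list[float], chunks: dict[str, list[float]]) -> list[tuple[str, float]]:
    """Hypothetical helper: score every chunk against the query, most similar first."""
    scored = [(name, cosine_similarity(query, vec)) for name, vec in chunks.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical toy embeddings; a real system would get these from an embedding model.
chunks = {
    "doc_a/section_1": [0.9, 0.1, 0.0],
    "doc_a/section_2": [0.2, 0.8, 0.1],
    "doc_b/section_1": [0.85, 0.2, 0.05],
}
query = [1.0, 0.0, 0.0]

for name, score in score_chunks(query, chunks):
    print(f"{name}: {score:.2f}")
```

A visualization could then map each score to a color or position, so the related sections of different documents stand out side by side.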